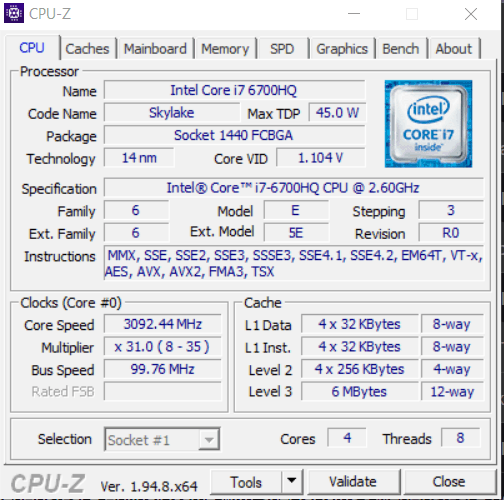

A core is the physical hardware inside the central processing unit (CPU) that executes instructions; a CPU may contain a single core or several. A thread, on the other hand, is a sequence of instructions delivered to a core so that it can run applications and processes.

This article defines and differentiates cores and threads. It also looks at how computers use them and traces the evolution from single-core computers, to single-core computers with hyperthreading, to multi-core computers with multi-threading.

Defining Cores and Threads

The regular computing process involves fetching instructions from the system’s memory, decoding them, and executing them. With the development of multi-core and multi-threading, however, the computing process has become streamlined: a single core no longer has to complete every instruction itself.
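
The fetch, decode, and execute steps can be sketched as a small loop. This is only a toy model: the three-instruction set (`LOAD`, `ADD`, `HALT`) and the `run` function are invented for illustration and do not correspond to any real CPU.

```python
# A toy fetch-decode-execute loop. The instruction set below
# (LOAD, ADD, HALT) is hypothetical and exists only for this sketch.

def run(program):
    """Execute a list of (opcode, operand) tuples and return the accumulator."""
    accumulator = 0
    program_counter = 0
    while True:
        # Fetch: read the next instruction from "memory".
        instruction = program[program_counter]
        program_counter += 1
        # Decode: split the instruction into its opcode and operand.
        opcode, operand = instruction
        # Execute: carry out the operation the opcode names.
        if opcode == "LOAD":
            accumulator = operand
        elif opcode == "ADD":
            accumulator += operand
        elif opcode == "HALT":
            return accumulator
        else:
            raise ValueError(f"unknown opcode: {opcode}")

result = run([("LOAD", 2), ("ADD", 3), ("HALT", 0)])
print(result)  # prints 5
```

A real core performs these steps in hardware and overlaps them across many instructions, but the order of the steps is the same.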

A core is the actual computer hardware that executes commands. A thread is the sequence of instructions, belonging to a particular process, that tells the CPU what it must execute. Essentially, threads represent the tasks that the CPU must carry out. The operating system (OS) creates the threads, and its scheduler decides which thread runs on which core and when.

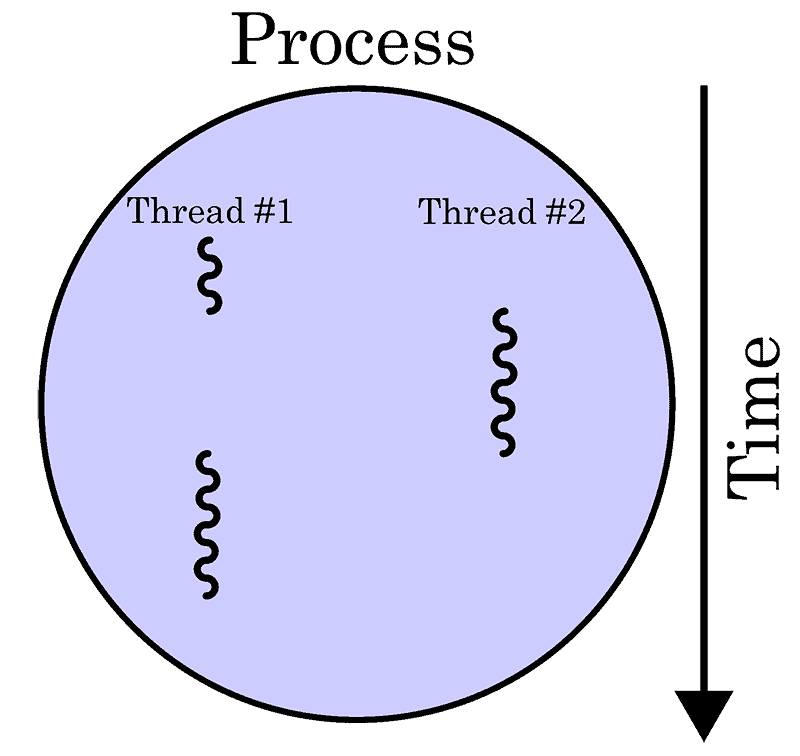

Starting a process creates at least one thread. A process is a running program, usually launched by a user command, and is made up of one or more threads. In simpler terms, the process is the final product while the threads are steps in the assembly line necessary to complete the final product.

Every process consists of at least one thread. Modern computers can divide a process into several threads and distribute these threads across the available cores. The simultaneous execution of these threads, also called parallel execution, lets the process finish sooner and run more smoothly.
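
As a rough sketch of dividing one job into several threads, the example below splits a list into chunks and hands each chunk to a worker thread. The helper names `split_into_chunks` and `threaded_sum` are made up for this example. Note also that in the standard CPython build the global interpreter lock keeps threads from running Python bytecode in parallel, so this shows the structure of the idea rather than a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def split_into_chunks(items, chunk_count):
    """Split items into chunk_count nearly equal contiguous chunks."""
    size, remainder = divmod(len(items), chunk_count)
    chunks, start = [], 0
    for i in range(chunk_count):
        # The first `remainder` chunks each take one extra item.
        end = start + size + (1 if i < remainder else 0)
        chunks.append(items[start:end])
        start = end
    return chunks

def threaded_sum(numbers, worker_count=4):
    """Sum numbers by giving each worker thread one chunk."""
    chunks = split_into_chunks(numbers, worker_count)
    with ThreadPoolExecutor(max_workers=worker_count) as pool:
        partial_sums = list(pool.map(sum, chunks))
    # Combine the per-thread results into the final answer.
    return sum(partial_sums)

total = threaded_sum(list(range(1, 101)))
print(total)  # prints 5050
```

The pattern is the same in any language: split the work, run the pieces on separate threads, then combine the partial results.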

Thus, more threads mean more parts of a particular process executing at one time, provided there are cores available to run them. This means that the workflow becomes more organized and processing becomes more efficient. Modern CPUs also use out-of-order execution (OoOE), which rearranges the instructions within a thread so that the core stays busy instead of waiting on a slow instruction.

The number of threads that can run at any given instant is limited by the number of cores in the system. Normally, each core can process only one thread at a time. Hyperthreading doubles the number of threads each core can handle, allowing it to process two threads at any given time.
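
The arithmetic is simple enough to write down. The function name `hardware_threads` is only illustrative, and the sketch assumes every core supports the same number of threads.

```python
def hardware_threads(core_count, threads_per_core=1):
    """Return how many threads the CPU can run at the same instant."""
    return core_count * threads_per_core

print(hardware_threads(4))     # 4 cores without hyperthreading: prints 4
print(hardware_threads(4, 2))  # 4 cores with hyperthreading: prints 8
```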

Meanwhile, more cores in a computer system allow more tasks to be processed at any given time. Having more cores means having more processing units to handle a variety of threads and processes.

Single-Core CPUs

The single-core CPU is the predecessor of the modern multi-core CPU. While single-core CPUs did well at executing individual tasks, they lacked the multitasking features necessary to execute more than one process at a time with ease.

Concurrency refers to the ability to have two or more tasks in progress over the same period. Single-core CPUs could handle concurrent tasks only by switching rapidly between them, without the actual parallel execution evident in multi-core CPUs today. Each switch between processes incurs a context switch overhead: the core must save the state of the current task and load the state of the next one, and the time spent doing so shows up as lag or reduced performance.
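
To make the context switch overhead concrete, here is a minimal simulation of one core taking turns between tasks in fixed time slices. The function `single_core_time` and all of the millisecond figures are assumptions chosen for illustration, not measurements of any real system.

```python
def single_core_time(task_lengths_ms, time_slice_ms, switch_cost_ms):
    """Simulate round-robin scheduling on one core.

    Returns (total elapsed ms, number of context switches).
    """
    remaining = list(task_lengths_ms)
    elapsed = 0
    switches = 0
    last_task = None
    while any(r > 0 for r in remaining):
        for task, left in enumerate(remaining):
            if left <= 0:
                continue  # this task has already finished
            # Pay the switch cost whenever the core changes tasks.
            if last_task is not None and last_task != task:
                elapsed += switch_cost_ms
                switches += 1
            run_for = min(time_slice_ms, left)
            elapsed += run_for
            remaining[task] -= run_for
            last_task = task
    return elapsed, switches

# Two 30 ms tasks, 10 ms slices, 1 ms per switch (all hypothetical).
print(single_core_time([30, 30], time_slice_ms=10, switch_cost_ms=1))  # (65, 5)
# The same tasks run back to back need only one switch.
print(single_core_time([30, 30], time_slice_ms=30, switch_cost_ms=1))  # (61, 1)
```

The useful work is 60 ms in both cases; the extra time comes entirely from switching, which is why frequent switching on a single core costs performance.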

To make up for the context switch overhead, manufacturers of single-core CPUs increased clock speed. A faster clock speed, measured in GHz, means faster execution of processes. This created the illusion that the computer was processing two tasks at a time. However, it was simply executing threads faster due to the increased clock speed.

Over time, however, higher clock speeds increased power consumption and heat build-up within the system, which degrades the hardware faster. Thus, raising clock speed became an inefficient way of offsetting the context switch overhead.

Hyperthreading

Before the rise of multi-core CPUs, Intel released a single-core CPU capable of hyperthreading in 2002. This provided a viable solution to increasing the multitasking capability of the single-core CPU.

Hyperthreading works by having a single physical core present itself to the operating system as two logical cores. Thus, the computer operates using two logical cores while using the resources of just one physical core.
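
One way to see logical cores from software is to ask the operating system how many CPUs it recognizes. In Python, `os.cpu_count()` reports logical CPUs, so on a machine with hyperthreading enabled the number is typically twice the physical core count. The standard library does not report the physical count, so the sketch below shows only the logical figure.

```python
import os

# os.cpu_count() reports logical CPUs, so a hyperthreaded core counts twice.
# It can return None when the count cannot be determined.
logical_cpus = os.cpu_count()

if logical_cpus is None:
    print("Logical CPU count could not be determined")
else:
    print(f"The OS sees {logical_cpus} logical CPUs")
```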

The single-core CPU with hyperthreading visibly increased the performance of the single-core computer in terms of speed of execution and ability to handle multiple applications at one time. However, the gain is considerably smaller than the gain from actual additional cores in multi-core CPUs.

Multi-Core CPUs

Multi-core CPUs provide the actual increase in core count that hyperthreading attempted to emulate in the single-core CPU. Having a greater number of cores allows users to increase the number of applications and processes running in the background without significant drop-offs in computer speed and performance. It also increases the amount of work that can be completed at any given time.

Multi-core CPUs solved the dilemma of single-core CPUs having to increase clock speed to compensate for the context switch overhead. Multiple cores can run at relatively moderate speeds, which helps maintain normal temperatures throughout the system. A multi-core CPU can also consume less energy than a single core pushed to a much higher clock speed, while still delivering more performance.
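
A back-of-the-envelope model shows why moderate clock speeds pay off. The sketch assumes the common approximation that a core's dynamic power is proportional to voltage squared times frequency, and further assumes that voltage has to rise roughly in step with frequency. Both are simplifications (static power is ignored), and the function `relative_dynamic_power` is hypothetical.

```python
def relative_dynamic_power(core_count, relative_clock):
    """Estimate total dynamic power relative to one core at baseline clock.

    Assumes per-core power is proportional to V^2 * f and that V scales
    linearly with f, so per-core power grows with the cube of the clock.
    """
    return core_count * relative_clock ** 3

one_fast_core = relative_dynamic_power(core_count=1, relative_clock=2.0)
two_moderate_cores = relative_dynamic_power(core_count=2, relative_clock=1.0)
print(one_fast_core, two_moderate_cores)  # prints 8.0 2.0
```

Under these assumptions, doubling the clock of one core costs about eight times the baseline power, while adding a second core at the baseline clock costs about twice the baseline. Real chips will differ, but the direction of the trade-off is the same.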

Before multi-core CPUs, there were motherboards that featured two sockets to fit two CPUs. However, this caused overheating due to greater power consumption as well as latency due to the inability of the two separate CPUs to communicate seamlessly. Today, the cores of a multi-core CPU are combined into a single form factor and inserted into a single socket.

Multi-Threading

Multi-threading is the process of using several threads to execute processes faster. This feature divides processes into various threads and runs them simultaneously across the various cores in order to reduce idle time and speed up the execution of processes. This shortens the response time of applications.
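
The reduction in idle time is easiest to see with tasks that spend most of their time waiting. In the sketch below, `fake_io_task` is a made-up stand-in that simply sleeps, and the 0.2 second delays are arbitrary. Exact timings will vary from machine to machine.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def fake_io_task(delay_s):
    """Stand-in for a task that mostly waits (disk, network, and so on)."""
    time.sleep(delay_s)
    return delay_s

def timed(run):
    """Return how long run() takes, in seconds."""
    start = time.perf_counter()
    run()
    return time.perf_counter() - start

delays = [0.2, 0.2, 0.2, 0.2]

# One after another: the program sits idle during every wait.
sequential_s = timed(lambda: [fake_io_task(d) for d in delays])

def run_threaded():
    # One thread per task: the waits overlap instead of adding up.
    with ThreadPoolExecutor(max_workers=len(delays)) as pool:
        list(pool.map(fake_io_task, delays))

threaded_s = timed(run_threaded)
print(f"sequential: {sequential_s:.2f}s, threaded: {threaded_s:.2f}s")
```

The sequential run takes roughly the sum of the delays, while the threaded run takes roughly the length of the longest single delay.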

Multi-threading can improve the performance of many applications, from modern games to web browsers. While it consumes more power and can heat up the system, it makes computer processing more efficient and can dramatically increase performance. Computer systems with multi-threading support, however, require an adequate power supply to accommodate the increased energy requirement.

Multi-threading also helps to utilize the cores more efficiently, especially when doing multiple tasks at once like playing games and streaming, where it helps reduce the likelihood of frame drops. Overall, multi-threading can increase the performance of the device by up to 30%.

Multi-threading makes a system feel faster because tasks complete sooner. The underlying cause, however, is not a higher clock speed but the more efficient and streamlined execution of threads. Multi-threading performs real parallel execution of threads across various cores, enabling applications to start up and run with little to no delay.

People who do video encoding and rendering benefit in particular, because multi-threading lets the computer divide the encoding or rendering job into various threads instead of executing the entire job as a whole. Modern games are also becoming more CPU-intensive and may require multi-threading to process resources optimally.

Image Credits:

Image by Cburnett shared under CC BY-SA 3.0